https://github.com/jessipedia/chat_proj

Class: Educate the Future

Fall 2016

Interactive Telecommunications Program

Overview

This course asked you to evaluate the need for higher education. You observed current problems and solutions, and imagined new ones for higher education 1 year, 5 years, and 10 years into the future. How will people learn? How will teachers teach? How will you measure your academic success? How will students connect to peers and experts? Who will be able to attend this future? Will higher ed be on your wrist or in a building? Will education be gamified? This documentation of the final project attempts to answer some of these questions.

Research / Backstory

“Globalization is a proxy for technology-powered capitalism, which tends to reward fewer and fewer members of society.”

-Om Malik

I am interested in continuing education for adults. I think that this is an overlooked area now, and that the need for more prevalent and comprehensive continuing education will only grow as more jobs become automatable.

Although the number of jobs threatened by automation is poised to grow massively in the near future (47% of the jobs in the US are at risk of automation), this is hardly a problem just for futurists. It is a problem for now.

I’m an upper-middle-class design and technology student living in New York City. This is not an issue that is going to affect me right away, if it affects me substantially at all: the careers I am training for are projected to be fairly safe from future automation. My economic position sets me up for all kinds of social blind spots where job loss and economic vulnerability are concerned, but it remains an issue I feel deeply about.

I grew up in Rochester, NY, home of Eastman Kodak. The imaging company still exists, but calling it a shell of its former self does not begin to describe its transformation: Kodak has gone from being a tech giant to a glorified Kinko’s. I was very young when things really started falling apart, but I remember watching that company die. The local news constantly reported wave after wave of layoffs. I listened to my parents talk in worried tones about which of their friends and acquaintances had gotten their “pink slip”. Local churches started support groups for people dealing with the emotional toll of job loss. Managers with kids in high school started over as cashiers at the local supermarket. In fifteen years, about 27,000 people lost their jobs.

But that’s not the part that stays with me. It’s this: today 33% of the people who live in the city of Rochester live in poverty. Household incomes across Monroe County have fallen, even in affluent towns and neighborhoods. By many metrics the area is well past the point of ever being able to recover. When Kodak died, the city took a blow it can never come back from.

Similar stories have been playing out in factory towns across the country for some time now, but something that always strikes me about Kodak’s story is that it wasn’t limited to blue-collar workers. Waves of job loss hit people seemingly indiscriminately. That is the kind of future increased automation may have in store for us. Education is hardly the only answer, but making sure that people have constant access to new education and information seems like a strong place to start.

How Technology Is Destroying Jobs

Baxter: The Blue-Collar Robot

SILICON VALLEY HAS AN EMPATHY VACUUM

Will Your Job Be Done By A Machine?

Elon Musk: Robots will take your jobs, government will have to pay your wage

Bill Gates on the Future of Employment (It’s Not Pretty)

The Future of Employment Report

How the Recession Upskilled Your Job

Labor Market Recovers Unevenly

Benchmarking Rochester’s Poverty

In Kodak’s town, life after layoffs

Problems

My original research problem, “Lack of accessible, well made continuing education resources for adults who need to re-skill”, was so broad that it was incorrect. Not all adults lack well-made continuing education; some people reskill just fine. Breaking the problem down ended up creating more questions:

- How can we help learners assess their own skills when they are looking to enter a new industry?

- How can we create quality, low cost material to help students learn new job skills?

- How can we make sure that continuing education resources are available to as many people as possible?

- How can we give as many students as possible access to great teachers?

- How can we help teachers reach more students?

- How do we assess what skills will be most valuable in the job market?

Design Challenge

I ended up picking “How can we help learners assess their own skills when they are looking to enter a new industry?” as my design question.

Interviews

I spoke with four people about their experiences moving into a new industry for this project. One was a woman who moved from marketing and PR to nursing, two were ITP students who left advertising to study for a creative career at ITP, and one was a woman who was trying to move from general non-profit management to arts non-profit management but had not yet managed to make the switch.

Common themes from the interviews were:

- The importance of having a plan. Not having a plan extends the uncomfortable period of not knowing, makes people feel aimless, and makes the problem seem insurmountable

- Almost all of the people I spoke with seemed to know early on what kind of career change they needed to make, but did not realize that they knew.

- People faced a set of unknown unknowns, not realizing that the things they were truly passionate about could become a career

- The feeling of being alone on the journey

Design

The points from my interviews that struck me most were that people seemed to know what they wanted to do, even if they didn’t realize that they knew, and that people felt lonely while they were going through this process.

I decided to work on a solution that would address these two issues. I also decided to limit my audience to adults roughly 20 to 30 years old. That was who I had interviewed and who I would be doing my user testing with, so it made sense, but it also seemed like helping someone at this stage of their career might have more impact than helping people who are mid-career. It’s easier for a 25-year-old to change direction than a 45-year-old, and if you make a change when you’re younger, you might save yourself decades of work misery.

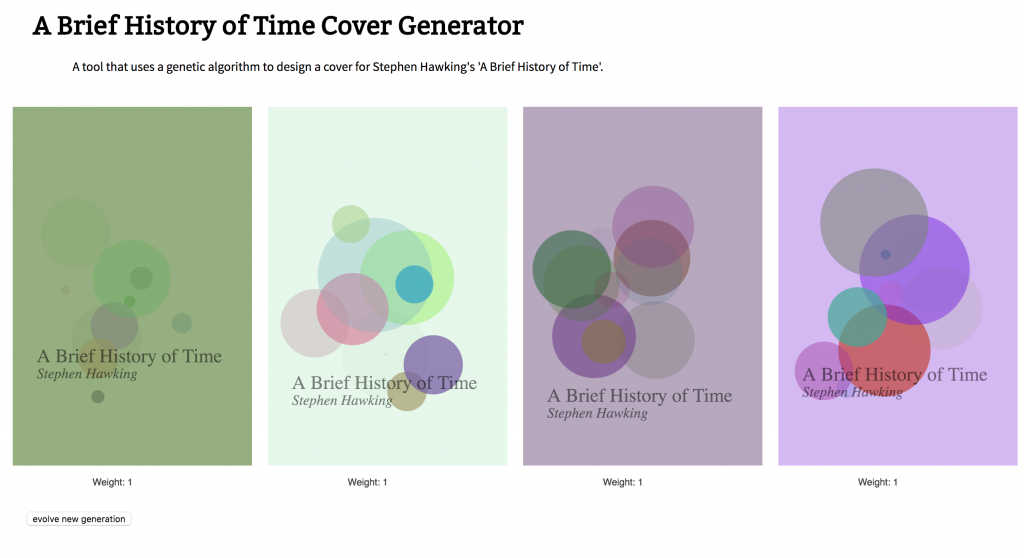

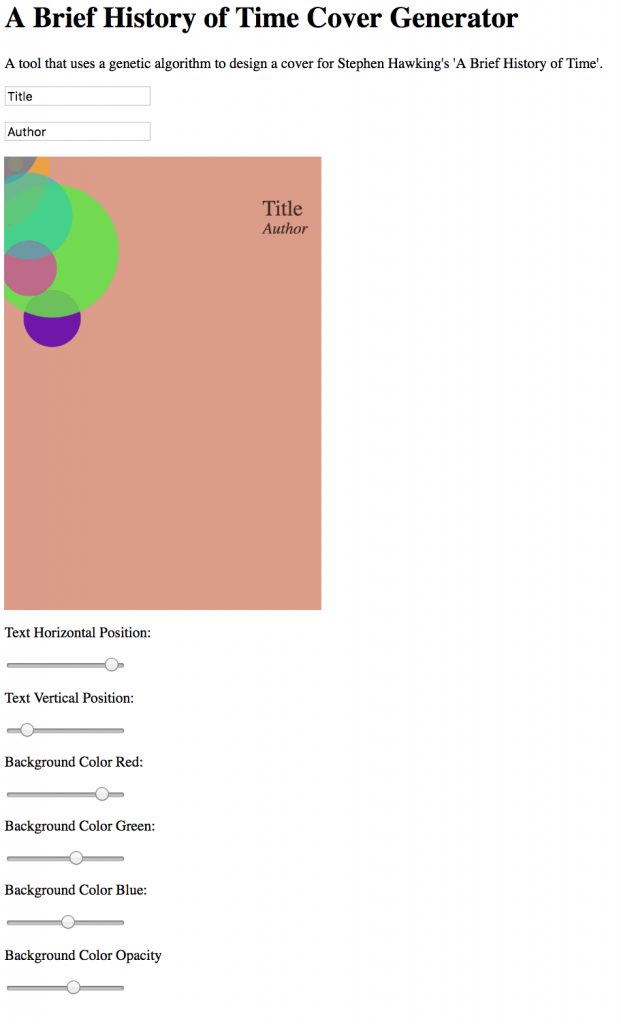

I ended up designing a chat bot that prompts users with questions. The hope is that these questions help people consider their career and realize that they already know which direction they need to head in. I also hope that a chat interface helps people feel less lonely. This is not a solution that would work for people disinclined to be introspective and to answer questions about themselves honestly. In this kind of exercise you get out what you put in, so if you do not put much in, you probably will not reap any insights.

When designing the bot, I assumed that the programming itself didn’t have to be very complex or ‘lifelike’. I think that dumb AI is better, not for any anti-technology reason, but because it allows people to project more of themselves into the conversation. I also think that people are less frustrated when they can quickly understand the limitations of an interaction. I found ELIZA, Joseph Weizenbaum’s chat program from 1966, to be an excellent source of inspiration.
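To make the ELIZA idea concrete, here is a minimal Python sketch of its core trick: match a keyword pattern, swap pronouns in the captured text, and slot the result into a canned reply. The rules, the pronoun table, and the function names (`respond`, `reflect`) are hypothetical illustrations in the spirit of ELIZA, not the code from this project’s repository.

```python
import re

# Hypothetical pronoun swaps so the bot can echo a user's words back,
# in the spirit of ELIZA's "reflection" trick.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my", "myself": "yourself",
}

# Hypothetical (pattern, template) rules, checked in order; first match wins.
# Templates with {0} are filled with the reflected text the pattern captured.
RULES = [
    (re.compile(r"\bi want to (.*)", re.I), "What would it mean to you to {0}?"),
    (re.compile(r"\bi feel (.*)", re.I), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.*)", re.I), "How long have you been {0}?"),
    (re.compile(r"\bjob\b", re.I), "What would you change about your work if you could?"),
]

# Neutral reply used when no rule matches, which keeps the conversation moving.
FALLBACK = "Tell me more about that."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    words = fragment.lower().strip(" .!?").split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(message: str) -> str:
    """Return the reply for the first matching rule, or the fallback."""
    text = message.strip()
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            if match.groups():
                return template.format(reflect(match.group(1)))
            return template
    return FALLBACK


if __name__ == "__main__":
    print(respond("I want to work in my community."))
    print(respond("I feel stuck at my job"))
```

Because the first matching rule wins, rule order is the main design lever: the more specific “I feel …” rule sits above the generic “job” keyword, so “I feel stuck at my job” gets a reflected question rather than a canned one.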

User Testing

Insights from user testing:

- About half of the people tried to break the bot, and the bot could not hold up

- Many people wanted the bot to have more personality

- Many people liked that the bot did not have much personality

- People liked that they could review the chat history on their phones

- Kyle wanted to be emailed ‘results’ or ‘insights’ from his sessions

- Some people did not like the discussion model used in the bot and wanted something more like CBT (cognitive behavioral therapy)

- People were a little confused at first about what the bot was

- People wanted the bot to be a general therapist, not just talk about career goals

Next Steps

More robust chat program – Everyone I user-tested the bot with tried to have an interaction with it that the bot couldn’t handle. This ranged from asking it questions about itself to outright trying to break it. I think that many people’s first instinct with a bot is going to be to try to break it, so being more ready for that is a must. People were also interested in the ‘character’ of the bot, which my test version largely lacked. Adding content about the bot’s ‘self’ would help. It could also be a good way to explain the point of the project and set the tone for the kind of answers the bot is expecting.
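One way to be “more ready” is a small check that runs before the normal question flow: it answers questions about the bot itself in character (which also explains the project’s purpose) and redirects empty or pasted-in input. This is a hedged Python sketch; the patterns, the wording of the answers, and the name `handle_off_script` are all hypothetical.

```python
import re
from typing import Optional

# Hypothetical "self" answers: each doubles as a short explanation of what
# the bot is for and what kind of answers it hopes to get.
SELF_ANSWERS = [
    (re.compile(r"\b(who|what) are you\b", re.I),
     "I'm a simple chat bot. I ask questions to help you think through what you want from your career."),
    (re.compile(r"\bare you (a )?(bot|robot|human|real)\b", re.I),
     "I'm a bot, and not a very clever one. The interesting answers are the ones you give."),
    (re.compile(r"\bwhy\b.*\b(ask|asking)\b", re.I),
     "Answering honestly is how you get something out of this. What's on your mind about work?"),
]

# Hypothetical in-character redirect for input the script can't use.
REDIRECT = "I'm not sure what to say to that. Can we get back to you? What kind of work makes you lose track of time?"

# Assumed cutoff for pasted-in walls of text; tune to taste.
MAX_LENGTH = 1000


def handle_off_script(message: str) -> Optional[str]:
    """Return an in-character reply for off-script input, or None to let the normal question flow continue."""
    text = message.strip()
    if not text or len(text) > MAX_LENGTH:
        return REDIRECT
    for pattern, answer in SELF_ANSWERS:
        if pattern.search(text):
            return answer
    return None


if __name__ == "__main__":
    print(handle_off_script("Who are you?"))
    print(handle_off_script("I like painting"))
```

Returning `None` rather than a reply keeps this check separate from the main conversation logic, so the bot’s ‘character’ can grow without touching the question script.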

From a technical point of view, this bot barely works when it does work. It cannot adapt and doesn’t use WebSockets, so only one person can chat with it at a time. A much more significant build-out would be needed if I ever expand the project.
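Whatever transport eventually carries the messages (WebSockets or something else), letting several people chat at once means each conversation needs its own state: its own position in the question script and its own history, which also supports the user-testing request to review past chats. A minimal Python sketch, assuming the bot walks a fixed list of questions and every client arrives with a unique session id; the questions, `Session`, and `SessionStore` are hypothetical, and the networking layer is deliberately left out.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Hypothetical question script; the real bot's questions are not listed here.
QUESTIONS = [
    "What did you want to be when you were a kid?",
    "What part of your current job do you look forward to?",
    "If money were no object, how would you spend your week?",
]


@dataclass
class Session:
    """Conversation state for one person: position in the script and past answers."""
    step: int = 0
    history: List[Tuple[str, str]] = field(default_factory=list)


class SessionStore:
    """Keeps one Session per session id so concurrent chats never share state."""

    def __init__(self) -> None:
        self._sessions: Dict[str, Session] = {}

    def start(self, session_id: str) -> str:
        """Begin (or restart) a conversation and return the first question."""
        self._sessions[session_id] = Session()
        return QUESTIONS[0]

    def reply(self, session_id: str, answer: str) -> Optional[str]:
        """Record an answer to the current question; return the next question, or None when done."""
        session = self._sessions[session_id]
        if session.step >= len(QUESTIONS):
            return None  # script already finished; ignore extra messages
        session.history.append((QUESTIONS[session.step], answer))
        session.step += 1
        if session.step < len(QUESTIONS):
            return QUESTIONS[session.step]
        return None

    def history(self, session_id: str) -> List[Tuple[str, str]]:
        """Return a copy of the (question, answer) pairs for review."""
        return list(self._sessions[session_id].history)


if __name__ == "__main__":
    store = SessionStore()
    print(store.start("ana"))
    print(store.start("ben"))
    print(store.reply("ana", "An astronaut"))  # ana moves on; ben is unaffected
```

With state keyed by session id, a WebSocket server (or any other transport) would only need to map each connection to an id; the conversation logic stays the same.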

The ability to suggest – Something I learned from user testing is that people often don’t know what kinds of opportunities are available for them to pursue their interests. I am hesitant to build a bot that simply tells someone what they should do with their life, but one that can suggest popular books related to their topic of interest, or find meetups, seems like it would help.

The ability to ask how things went – The main point of this project is to help people consider what they want from a job or career and to reflect. Checking in with people after they do something on their work-path, like going to a meeting or having an informational interview, seems like another strong opportunity to help people reflect.

The Future

This section is an addendum to the project. This project had me considering the future of AI assistants. Most of the AI assistants we have now (Amazon Echo, Siri, Cortana, Google Assistant) are directed assistants: they only interact with you when you talk to them first. Directed assistants like these seem to dominate how we imagine the future of AI assistants. However, I don’t think that these kinds of assistants help us be better humans.

An example from my presentation is Ask Jeeves vs. Literary Jeeves. The first gives you answers when you ask it directly, but the second is interested in helping someone be their best self. I think that we should be trying to design artificial assistants that help us be better people, not just all-knowing lazy people. At first glance this seems like a technical challenge that calls for all kinds of fancy sentiment analysis and machine learning, but as ELIZA shows, you can get a long way with simple technology and good writing.